Cloud engineering skills are topping the list of 2023’s most in-demand tech skills, with 75% of tech leaders planning to build all their new products and features in the cloud.

This has led companies to increasingly adopt complex multi-cloud strategies. However, cloud technologies are advancing faster than the supply of engineers available to hire.

With the cloud skills gap prompting Google alone to train more than 40 million people in cloud computing skills, finding and evaluating the right cloud engineer is more challenging than ever for talent teams.

That’s why we created this ultimate guide to cloud computing interview questions, covering seniority levels from basic to advanced and broken down by specialty.

And for the complete info on hiring a Cloud Engineer, including cloud computing terminology for recruiters, info on standard cloud titles, JD templates and exclusive hiring tips based on our Talent Graph of 10+ million engineering profiles in the US and Canada, you can check out our ebook “The Essential Hiring Guide for Cloud Engineers.”

Cloud engineer interview questions: the fundamentals

Try these cloud computing interview questions and answers to start easy and verify that a cloud engineer has the basics down.

#1: What is the cloud?

The cloud is a network of servers that are used to store, manage, and process data remotely rather than on a local server or personal computer. The cloud enables users to access information and applications anywhere, anytime, from any device with an Internet connection.

source: towardsdatascience.com

#2: Can you explain the difference between a private cloud, public cloud, hybrid cloud, and multi-cloud ecosystem?

Public clouds are owned and operated by third-party companies and made available online. Examples include Amazon Web Services (AWS), Microsoft Azure, and Google Cloud Platform. They allow companies to pay as they go for the computing resources they use for greater flexibility and scalability.

Private clouds are dedicated to a single organization and are usually located on-premises or in a data center owned by the same organization. Private clouds offer more control and security than public clouds.

Hybrid clouds combine public and private cloud services. Organizations can choose the best option for each application or workload while maintaining a unified computing environment. For example, specific applications may be run in a private cloud for security reasons, while less critical applications may be run in a public cloud for cost savings.

A multi-cloud environment combines two or more public clouds. The approach allows companies to take advantage of the strengths of different cloud platforms while avoiding vendor lock-in and reducing the risk of downtime. A successful multi-cloud strategy ensures visibility, interoperability, and security.

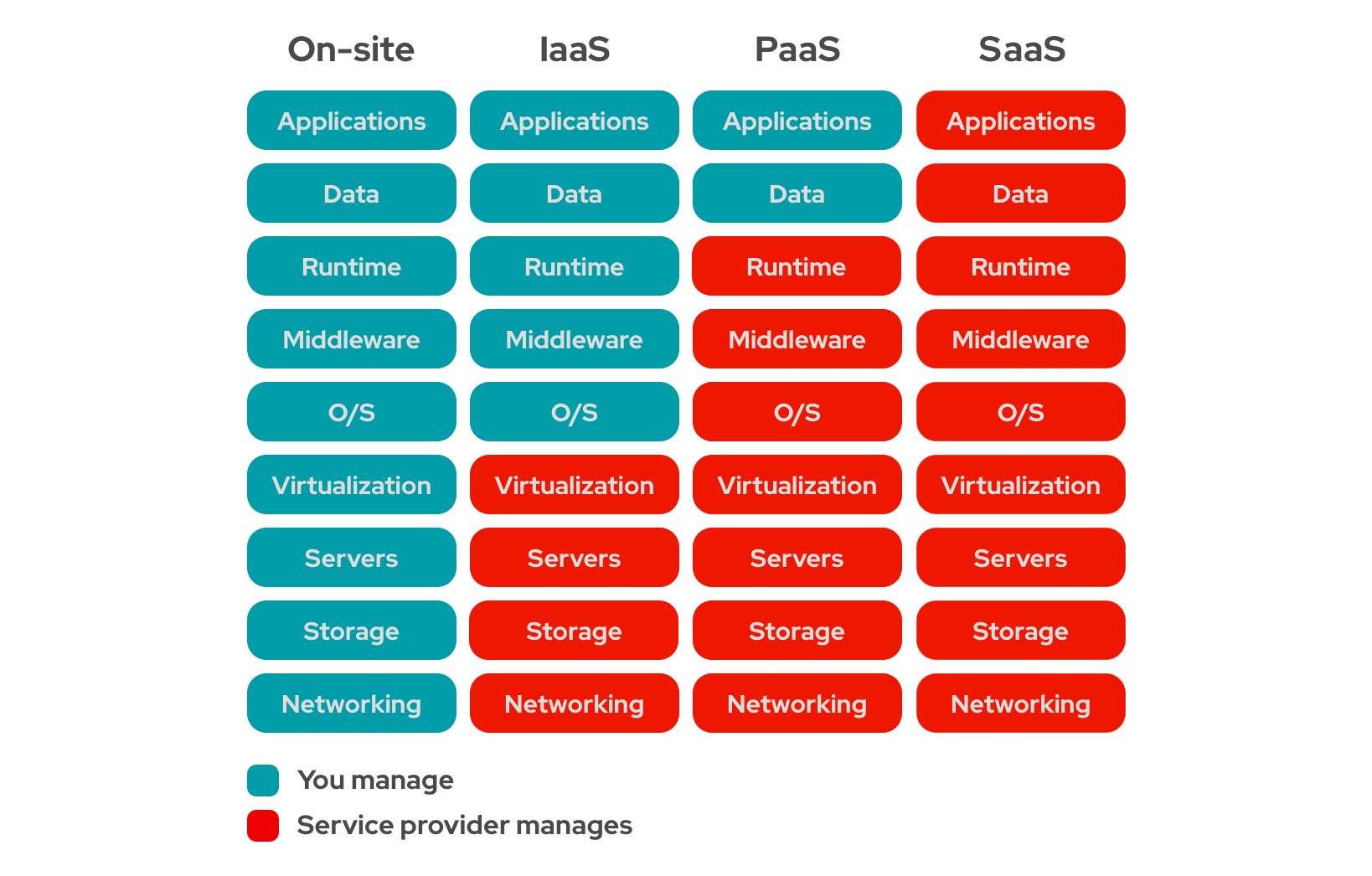

#3: What is the difference between IaaS, PaaS, and SaaS in cloud computing?

Infrastructure as a service (IaaS) provides computing resources such as servers, storage, and networking over the internet. Customers control the operating systems, storage, and deployed applications that run on the infrastructure, while the provider manages the underlying infrastructure itself. With IaaS, companies no longer have to purchase, house, and maintain their own physical servers.

Some examples of IaaS are renting a virtual computer through Amazon’s EC2 or storage through Google Cloud Storage.

Platform as a service (PaaS) is a set of high-level services that allow developers to build and deploy applications. Platforms speed up software development by providing ready-made resources such as databases, search, messaging, firewalls, etc.

Some common examples of PaaS include Amazon OpenSearch Service (formerly Amazon Elasticsearch Service), Google App Engine, Heroku, and Salesforce Lightning Platform.

Software as a service (SaaS) provides access to fully formed software applications over the internet, typically on a subscription basis. SaaS is meant for end users to use directly — the provider manages all aspects of the software in the background, including infrastructure, security, and maintenance.

Some examples of SaaS include Gmail, Salesforce, and Slack.

Source: redhat.com

#4: What is the difference between a Virtual Machine and a container?

A Virtual Machine (VM) is a software-based emulation of a computer system. A hypervisor lets multiple VMs run on one physical computer as if each had the entire computer to itself. VMs provide a completely virtual environment, including virtualized hardware, operating system, storage, and network resources, isolated from the underlying physical infrastructure. VMs allow a single, powerful computer to be shared by many workloads, each with its own environment and resources.

A container, on the other hand, is a lightweight, standalone executable package of software that includes everything needed to run the application, including the code, runtime, system tools, libraries, and settings. Unlike VMs, containers share the host operating system's kernel but are isolated from each other at the process level. Because operating systems are large, running a full copy in every VM consumes significant resources; containers avoid that overhead, so they are even better at minimizing unused computing capacity (2-3x more efficient).

#5: What is the difference between scalability and elasticity?

Scalability is the ability to add resources to a system or application to handle an increased load.

Elasticity is the ability of a system to scale capacity up and down in response to changes in demand.

Scalability and elasticity are critical features of cloud computing, which allow organizations to pay only for the computing resources they use and scale their infrastructure on demand as their needs continue to evolve.
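One way to picture elasticity is a target-tracking rule that recomputes capacity from observed load, so the instance count grows and shrinks with demand. The sketch below is a minimal illustration, assuming utilization is measured as a percentage; the proportional formula mirrors the one documented for the Kubernetes Horizontal Pod Autoscaler, while the function name, bounds, and numbers are hypothetical.

```python
import math


def desired_instances(current: int, current_util_pct: float, target_util_pct: float,
                      min_instances: int = 1, max_instances: int = 20) -> int:
    """Hypothetical target-tracking rule: size the fleet so average
    utilization moves toward the target percentage."""
    if current < 1 or target_util_pct <= 0:
        raise ValueError("need at least one instance and a positive target")
    # Scale in proportion to how far observed load is from the target.
    desired = math.ceil(current * current_util_pct / target_util_pct)
    # Clamp to configured bounds so capacity never drops to zero or runs away.
    return max(min_instances, min(max_instances, desired))


print(desired_instances(4, 90, 60))  # demand rises: 4 -> 6 instances
print(desired_instances(6, 20, 60))  # demand falls: 6 -> 2 instances
```

The same rule handles both directions, which is the distinction drawn above: a scalable system can take on more instances, while an elastic one also releases them automatically when demand drops.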

#6: What are the key benefits of cloud computing?

Besides scalability and elasticity, the key benefits of cloud computing are:

- Cost savings: organizations can reduce capital expenditures and operating costs, as they only pay for the resources they consume on a pay-per-use basis rather than having to invest in and maintain expensive in-house infrastructure.

- Improved performance, availability, and security: cloud providers such as Google, Amazon, and Microsoft invest heavily in high-performance infrastructure designed to maximize uptime. They also employ security experts to monitor the cloud for issues and potential breaches.

- Increased agility and speed: organizations can quickly provision and deploy new applications and services without waiting for the procurement, installation, and configuration of new hardware.

- Disaster recovery and business continuity: reputable cloud providers have multiple data centers in different locations. As a result, even if a data center catastrophically fails, your data is unlikely to be lost.

#7: What are the pros and cons of serverless computing?

Just as containers use computing resources more efficiently than VMs, serverless computing is a newer model that is more efficient still. Serverless services such as AWS Lambda let users upload individual functions (rather than a complete app or program), which the provider runs on demand. This model is also known as FaaS, or functions as a service.
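To make "uploading a function" concrete, here is a minimal sketch of an AWS Lambda handler in Python. Lambda invokes a handler with an `event` and a `context` argument; the API Gateway-style event (with `queryStringParameters`) and the greeting logic are illustrative assumptions, and the handler is called locally here rather than deployed.

```python
import json


def lambda_handler(event, context):
    """Entry point the serverless platform would invoke once per event.
    The code contains no server, port, or process management."""
    # API Gateway sends None when a request has no query string.
    params = event.get("queryStringParameters") or {}
    name = params.get("name", "world")
    # API Gateway proxy integrations expect a statusCode and a string body.
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }


if __name__ == "__main__":
    # Local smoke test: simulate the event the platform would pass in.
    response = lambda_handler({"queryStringParameters": {"name": "Ada"}}, None)
    print(response["statusCode"], response["body"])
```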

The pros:

- Increased cost savings

- No server management is necessary

- Enhanced scalability and flexibility

- Reduced latency

The cons:

- Cold starts (functions can experience a delay when they start up after being idle, resulting in slower response times)

- Debugging complexity

- Vendor lock-in

- Security

Intermediate cloud computing interview questions

These cloud engineer interview questions dig into more specific cloud computing principles and strategies.

#1: Which cloud computing tools and skills have you used? Which are you the most experienced in?

While the answer to this question will vary depending on the specific cloud engineering role and individual background of the candidate, here are some of the most common cloud computing tools:

- Cloud provider tools: the native services offered by the major cloud providers.

AWS’s most common cloud services include: Elastic Compute Cloud (EC2), Simple Storage Service (S3), Lambda, Relational Database Service (RDS)

GCP’s most common cloud services include: Compute Engine, Cloud Storage, Cloud Functions, Cloud SQL

Azure’s most common services include: Virtual Machines, Blob Storage, Functions, Backup, Azure SQL

- Infrastructure as Code (IaC) tools allow cloud engineers to manage and provision cloud infrastructure using code rather than manual configuration. Examples: Terraform, CloudFormation

- Containerization tools enable cloud engineers to package, deploy, and manage containers and microservices. Examples: Docker, Kubernetes, OpenShift, AWS Elastic Container Service (ECS)

- Monitoring and logging tools provide real-time visibility into cloud resource performance and usage to diagnose and resolve issues. Examples: Amazon CloudWatch, Google Cloud Operations, Datadog

- Configuration management tools automate the provisioning and management of cloud resources, reducing manual effort and improving reliability. Examples: Ansible, Chef, Puppet, SaltStack (Salt)

#2: Why should cloud applications be architected with microservices?

- Simplicity: each microservice serves a specific and limited purpose, simplifying the overall application development process.

- Scalability: microservices can be scaled independently, which allows organizations to scale different parts of their application as needed without affecting the entire system and to right-size their cloud infrastructure.

- Resilience: since microservices are deployed and managed independently, a failure in one service need not bring down the entire system, making it more resilient and less prone to downtime.

- Flexibility: microservices can be developed and deployed using different programming languages and technologies, which allows organizations to choose the best tool for the job and to adapt to changes in technology over time.

- Easier maintenance and updates: code changes are smaller and less complex than with a monolithic application and are easy to roll back in case of failure. This results in an improved ability to experiment and faster time-to-market.

#3: What kinds of workloads are not suited for the cloud?

- Latency-sensitive applications with stringent performance requirements may not be suitable for the cloud. Because data has to travel over the network to the cloud servers, applications in which low latency, high bandwidth, and real-time processing are crucial may rely instead on edge computing. (Edge computing brings computation and storage closer to the data sources to enable processing at greater speeds and volumes.)

- Applications with high data sovereignty requirements. In certain domains, apps that store or process sensitive data may have regulatory or compliance requirements to be stored on-premises or in a third-party, non-public data center.

- Applications with strict reliability or performance requirements may not be suitable for the cloud. It’s impossible to guarantee 100% uptime in a shared, multi-tenant environment, and legacy workloads may not have been architected to run in a distributed computing environment.

Source: cybersecurity.att.com

- Applications with heavy resource utilization (i.e. large amounts of CPU, memory, or storage resources) may be more cost-effective to run on-premises or in a dedicated environment.

- Applications with specialized hardware requirements may not be suitable for the cloud as the necessary resources may not be available or may be cost-prohibitive. However, it’s worth noting that cloud vendors continue to improve the specialized cloud environments they offer for different types of workloads.

#4: What are the benefits of cloud orchestration? How do you approach cloud orchestration?

Cloud orchestration is the automation of cloud resource management and deployment processes. It has become increasingly important as the rise of containerized, microservices-based applications and multi-cloud environments has increased complexity. Its benefits include:

- Cost management: improving resource utilization by provisioning resources only as needed, detecting and eliminating superfluous resources, and reducing the need for manual IT administration

- Improved integration: bridging the gap between clouds or between public and private environments

- Increased reliability: automated failover and disaster recovery processes enabled by cloud orchestration can improve system availability and reduce downtime.

- Enhanced collaboration: single-source-of-truth dashboards share data across all relevant teams (such as IT operations, security, etc.)

- Better security: resulting from the ability to automatically and continuously scan for vulnerabilities and test for compliance

You can also listen for answers that discuss the concrete use of cloud orchestration tools such as CloudFormation, Ansible, Terraform, and Kubernetes.

#5: Can you explain the principles of Infrastructure as Code (IaC)?

Infrastructure as Code (IaC) is a methodology for managing and provisioning IT infrastructure through code rather than manual processes. Its principles include:

- Version Control: all code and configurations used to manage infrastructure should be stored in a version control system to track changes, provide a clear history of the infrastructure, and be able to roll back to previous states if necessary

- Idempotence: multiple runs of the same code should result in the same infrastructure state to simplify infrastructure provisioning and make it more reliable and consistent

- Immutability: changes are made by creating new resources rather than modifying existing ones. This helps prevent configuration drift and promotes scalability

- Testing: infrastructure code should be tested continually, at the lowest possible level, to reduce the risk of production issues.

- Reusability: code and configurations should be reusable and modular to promote efficiency and consistency and to mitigate the cost of failure
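Idempotence can be illustrated with a toy reconciler that compares a declared desired state with the current state and plans only the changes needed to close the gap, so a second run plans nothing. This is a simplified model in the spirit of the plan/apply cycle of tools like Terraform, not their actual implementation; the dictionary-based "infrastructure" and the resource names are hypothetical.

```python
from typing import Dict, List

# Hypothetical resource model: resource name -> configuration dict.
State = Dict[str, dict]


def plan(desired: State, current: State) -> List[str]:
    """List the actions needed to make `current` match `desired`."""
    actions = []
    for name, config in desired.items():
        if name not in current:
            actions.append(f"create {name}")
        elif current[name] != config:
            actions.append(f"update {name}")
    for name in current:
        if name not in desired:
            actions.append(f"delete {name}")
    return actions


def apply(desired: State, current: State) -> State:
    """Toy apply: report the planned actions and return a state equal to
    the declaration. A real tool would call provider APIs for each action."""
    for action in plan(desired, current):
        print(action)
    return {name: dict(config) for name, config in desired.items()}


desired = {"web-vm": {"size": "small", "zone": "a"}, "bucket": {"versioning": True}}
current = {"web-vm": {"size": "micro", "zone": "a"}, "old-vm": {"size": "small"}}

state = apply(desired, current)  # prints: update web-vm, create bucket, delete old-vm
print(plan(desired, state))      # [] because a second run changes nothing
```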

Advanced interview questions: from cloud services to cloud computing architecture

For advanced-level cloud engineer interview questions, answers will vary. We’ve outlined some general concepts that cloud engineers will likely touch upon, but the most important thing is to listen for specific tools used, problems solved, and results achieved.

#1: What are some of the biggest challenges facing the cloud computing industry today?

While the answer to this question will vary, you should listen for answers that demonstrate broad expertise in the cloud computing industry, knowledge of recent cloud computing issues and trends, big-picture critical thinking when it comes to business problems, and creative problem-solving skills.

A few topics candidates may reference include:

- Rising costs of state-of-the-art cloud systems, cloud cost optimization, and multi-cloud sprawl

- Integrating AI/ML technologies into cloud computing

- Emerging cloud security challenges targeting IP addresses, VPNs, OT systems, etc.

- Adoption of serverless computing models

- Increased government regulation around data privacy, security, etc.

#2: How do you design and implement a highly scalable and available cloud architecture?

According to the most recent version of the Google Cloud Architecture Framework, some ways to design cloud computing architecture for scale and high availability include:

- Creating redundancy by replicating across multiple failure domains, with no single point of failure

- Implementing a multi-zone architecture with load balancing and automated failover between zones

- Eliminating scalability bottlenecks, for example by scaling horizontally with sharding (partitioning) across VMs or zones

- Degrading service levels gracefully when overloaded rather than failing completely

- Preventing and mitigating traffic spikes with techniques such as throttling, queuing, load shedding, circuit breaking, and prioritizing critical requests
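Circuit breaking, one of the overload techniques listed above, can be sketched in a few lines: after a run of consecutive failures the breaker "opens" and fails fast instead of hammering an unhealthy dependency, then lets a trial request through after a cooldown. This is a minimal single-threaded sketch; the class name, thresholds, and injectable clock are hypothetical choices, and production systems typically rely on a library or service mesh for this.

```python
import time
from typing import Callable, Optional


class CircuitBreaker:
    """Minimal circuit breaker with closed, open, and half-open behavior."""

    def __init__(self, failure_threshold: int = 3, reset_timeout: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, func: Callable, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_timeout:
                # Open: fail fast without touching the struggling dependency.
                raise RuntimeError("circuit open")
            # Half-open: the cooldown has passed, so allow a trial call.
        try:
            result = func(*args, **kwargs)
        except Exception:
            self.failures += 1
            # A failed trial call, or too many failures, (re)opens the circuit.
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        # Success closes the circuit and resets the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

With a fake clock, two failures against a `failure_threshold=2` breaker open it, further calls raise `RuntimeError("circuit open")` immediately, and once the clock passes `reset_timeout` a successful call closes it again.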

#3: What cloud monitoring tools do you use and why?

Some popular cloud monitoring tools include:

- Amazon CloudWatch

- Google Cloud Operations suite (formerly Stackdriver)

- Azure Monitor

- Datadog

- New Relic

- Nagios

- Dynatrace

- Sumo Logic

- SolarWinds

- Zabbix

#4: Can you walk me through one of the cloud computing projects you’re most proud of, that you oversaw from ideation to implementation?

Though this question may seem simple, having a candidate talk through a cloud computing project is an excellent way to gauge their overall experience level and give insight into their thought process.

Whom did they work with? What were the problems they were solving? What was their approach? How did they handle bottlenecks and setbacks in the development process? What did they learn — was there anything they could have done better, or did they pick up a new language, technology, or skill?

Great answers will reflect the use of metrics to measure success, incorporation of feedback, and a focus on results and overall business impact.

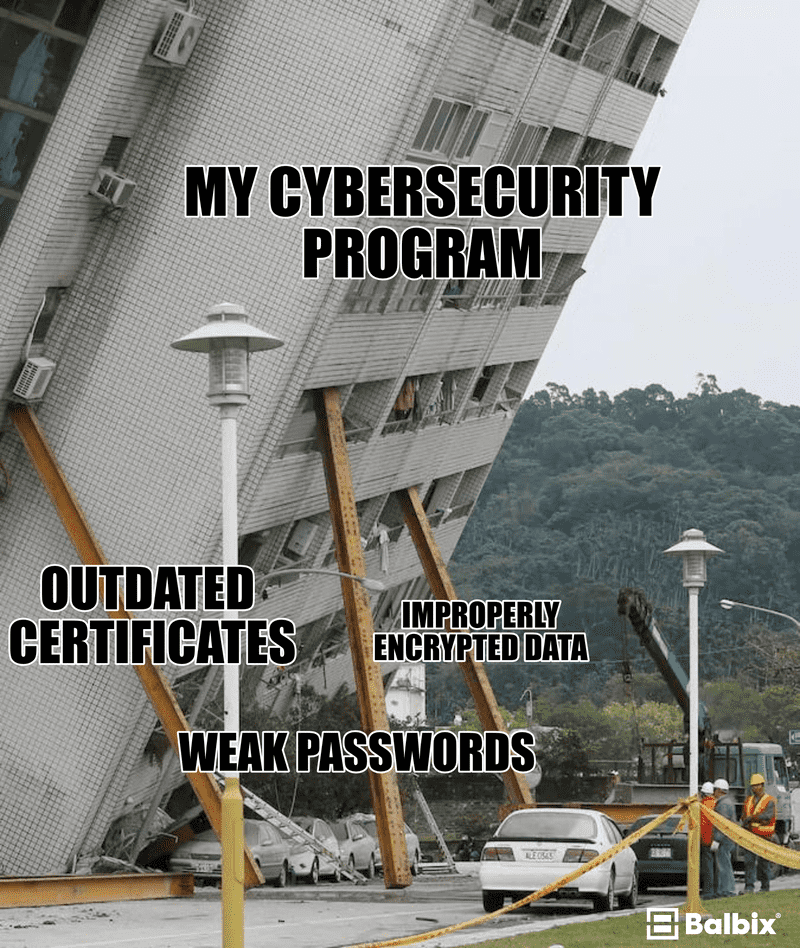

Cloud security engineer interview questions

With cloud security named one of 2023’s top threats by executives, here are some cloud security engineer interview questions and answers for vetting cybersecurity professionals.

#1: What risks are associated with working with an external cloud provider?

- Compliance: cloud service providers may not meet the specific regulatory requirements of your industry, which could result in non-compliance issues and legal penalties. In some industries, a private cloud may be preferred.

- Security: in a multi-tenant cloud architecture, your applications and data may run on the same physical servers as those of other customers using the same service. If one of those customers’ applications is breached or infected with malware, your resources may be affected.

- Vendor Lock-in: moving to a different cloud service provider can be challenging and expensive and may require re-architecting applications and systems.

- Visibility: in many cloud computing environments, you may not see what your provider is doing. You may be unable to verify that they comply with regulations, for example, or that their employees have been thoroughly vetted.

- Cost Overruns: cloud costs may exceed budget projections, or unexpected charges may be incurred.

#2: What are some cloud computing attacks?

- DDoS attacks: distributed denial-of-service attacks that overload cloud infrastructure with high volumes of traffic to disrupt cloud services

- Session hijacking attacks: including session sniffing, client-side attacks, man-in-the-middle attacks, and man-in-the-browser attacks

- Phishing attacks: using social engineering to steal cloud credentials or trick users into installing malware

- Injection attacks: exploiting vulnerabilities to inject malicious code into cloud applications, for example to execute remote commands

- Misconfiguration attacks: exploiting insecure configurations, such as publicly accessible storage buckets or overly permissive access rights

#3: Can you discuss Identity and Access Management (IAM) in cloud computing?

Identity and Access Management (IAM) enables organizations to manage and control access to cloud computing resources, sensitive data, and other IT services.

In cloud computing, IAM controls who can access resources and applications such as virtual machines, databases, and storage containers. This includes defining roles and permissions for users, setting up multi-factor authentication, and tracking and auditing user activity.
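To illustrate how roles and permissions combine, here is a toy policy evaluator that follows two widely used rules, both documented for AWS IAM: access is denied by default, and an explicit deny overrides any allow. The policy format, role, and action strings are hypothetical and far simpler than any real IAM policy language.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase
from typing import List


@dataclass
class Statement:
    effect: str            # "Allow" or "Deny"
    actions: List[str]     # wildcard patterns such as "storage:Get*"
    resources: List[str]   # wildcard patterns such as "bucket/reports/*"


def is_allowed(statements: List[Statement], action: str, resource: str) -> bool:
    """Default deny; any matching Deny wins; otherwise a matching Allow grants access."""
    allowed = False
    for s in statements:
        matches = (any(fnmatchcase(action, a) for a in s.actions)
                   and any(fnmatchcase(resource, r) for r in s.resources))
        if not matches:
            continue
        if s.effect == "Deny":
            return False  # explicit deny overrides every allow
        if s.effect == "Allow":
            allowed = True
    return allowed


# Hypothetical least-privilege role for a reporting service.
analyst_role = [
    Statement("Allow", ["storage:Get*", "storage:List*"], ["bucket/reports/*"]),
    Statement("Deny", ["storage:*"], ["bucket/reports/payroll/*"]),
]

print(is_allowed(analyst_role, "storage:GetObject", "bucket/reports/q1.csv"))         # True
print(is_allowed(analyst_role, "storage:GetObject", "bucket/reports/payroll/a.csv"))  # False
print(is_allowed(analyst_role, "storage:DeleteObject", "bucket/reports/q1.csv"))      # False
```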

#4: Can you explain the differences between encryption in transit, encryption at rest, and encryption of data in use?

Encryption in transit protects data as it travels over a network, such as the internet, from one location to another. The data is encrypted during transmission (for example, with TLS, the protocol behind HTTPS) to prevent tampering or eavesdropping.

Encryption at rest protects data stored on a physical device or in a cloud environment. The data is encrypted so that it is unreadable without the correct decryption key (for example, if the device or system is lost or stolen).

Encryption of data in use protects data while it is being processed, such as when it is loaded into memory or modified by an application.
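Encryption in transit usually comes down to configuring TLS correctly. Here is a minimal client-side sketch using Python's standard `ssl` module: the default context verifies server certificates and hostnames, and the code additionally refuses protocol versions older than TLS 1.2. The helper names are hypothetical, and the host in the usage comment is a placeholder.

```python
import socket
import ssl


def make_client_context() -> ssl.SSLContext:
    """Client-side TLS settings for protecting data in transit."""
    # create_default_context() loads the system CA bundle and enables
    # certificate verification and hostname checking.
    ctx = ssl.create_default_context(ssl.Purpose.SERVER_AUTH)
    # Refuse legacy protocol versions.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


def fetch_tls_version(host: str, port: int = 443) -> str:
    """Open an encrypted connection and report the negotiated TLS version."""
    ctx = make_client_context()
    with socket.create_connection((host, port), timeout=5) as raw:
        with ctx.wrap_socket(raw, server_hostname=host) as tls:
            return tls.version()


# Usage (requires network access; the host is a placeholder):
# print(fetch_tls_version("example.com"))
```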

#5: What are some parameters you should consider when assessing your cloud vendor?

When it comes to ensuring cloud service providers meet your security requirements, you might consider some questions like the following:

- What kinds of companies do they currently service? How do they handle multi-tenancy?

- Does the vendor comply with cloud computing security and privacy standards, such as ISO 27001, SOC 2, or PCI DSS?

- Where will your data be stored, and who will access it?

- What kinds of security measures do they have in place, whether virtual (firewalls, encryption) or physical (guards, barriers)?

- Do they have incident response plans, data backup plans, and other plans for crises?

source: balbix.com

AWS cloud engineer interview questions

#1: What are the key benefits of AWS versus other cloud service providers?

AWS is the largest and most mature cloud service provider, with the greatest market share and resources.

It has the most extensive range of services and solutions, a strong focus on open-source technology, and support for various programming languages, databases, and tools

#2: Can you explain the concept of AWS regions and availability zones?

Amazon EC2, AWS’s cloud computing capacity service, is hosted in multiple locations worldwide. These locations are composed of:

- AWS Regions are separate geographic areas, each containing multiple Availability Zones (AZs). An AZ consists of one or more physically isolated data centers with independent power, cooling, and network connectivity, and each region is designed to be isolated from failures in other regions. Because the AZs within a region are connected by low-latency networking, AWS can provide high levels of redundancy and fault tolerance while maintaining low latency, high throughput, and protection against data loss.

- Local Zones provide the ability to place resources such as computing and storage in locations closer to your end users

- AWS Outposts allow customers to run AWS infrastructure on-premises in their data centers

- Wavelength Zones allow customers to run compute and storage services on the edge of the 5G network, close to users and devices, for low-latency and high-bandwidth experiences.
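The practical payoff of multiple Availability Zones is that replicas can be spread out so a single-zone failure leaves capacity running elsewhere. A small sketch of round-robin placement and of what survives when one zone goes down; the zone names follow AWS's naming format, but the functions and replica counts are hypothetical.

```python
from collections import Counter
from itertools import cycle
from typing import Dict, List


def place_replicas(count: int, zones: List[str]) -> Dict[str, int]:
    """Spread `count` replicas across zones round-robin, as evenly as possible."""
    placement = Counter()
    for _, zone in zip(range(count), cycle(zones)):
        placement[zone] += 1
    return dict(placement)


def surviving_capacity(placement: Dict[str, int], failed_zone: str) -> int:
    """Replicas still running if one whole zone becomes unavailable."""
    return sum(n for zone, n in placement.items() if zone != failed_zone)


zones = ["us-east-1a", "us-east-1b", "us-east-1c"]
placement = place_replicas(6, zones)
print(placement)                                    # 2 replicas in each zone
print(surviving_capacity(placement, "us-east-1b"))  # 4 replicas keep serving
```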

#3: Can you compare Amazon S3, EBS, and EFS?

While Amazon S3 (Simple Storage Service), EBS (Elastic Block Store), and EFS (Elastic File System) are all storage services, they are designed for different use cases.

- Amazon S3 is an object storage service that can be used for many data storage scenarios, such as data lakes, websites, mobile applications, backup and restore, archive, enterprise applications, IoT devices, and big data analytics. It is designed for high durability and availability and is suitable for storing large amounts of data for the long term.

- Amazon EBS is a block storage service that provides storage volumes for individual EC2 instances (i.e., virtual machines that run in the AWS cloud). EBS can be used as primary storage for applications, as well as for database storage.

- Amazon EFS is a file storage service that can store data accessible to multiple EC2 instances simultaneously. It is designed for use cases that require shared file storage, such as big data analytics, content management systems, and application development. EFS can scale automatically to accommodate growth in data storage needs.

#4: Can you explain the differences between Amazon EC2 instance types?

Here are some of the different EC2 instance types:

- General Purpose: well-suited for general-purpose applications that require a balance of computing, memory, and I/O performance. Some use cases include network-intensive workloads like backend servers, enterprise, and gaming servers. Examples: t2, m5, and m6 families

- Compute Optimized: designed for compute-intensive applications that require high CPU performance, such as batch processing workloads, media transcoding, and high-performance web servers. Examples: c5 and c6

- Memory Optimized: for applications that require high memory performance. Use cases include relational database workloads with high per-core licensing fees and financial, actuarial, and data analytics simulation workloads. Examples: r5 and x1

- Storage Optimized: designed for workloads that require high, sequential read and write access to extensive data sets on local storage. They are good for workloads that require high compute performance and high throughput or workloads that require fast access to medium size data sets on local storage, such as search engines and data analytics workloads. Examples: d2, h1

Candidates might also mention Accelerated Computing instances, HPC Optimized instances, GPU instances, ARM instances, and other specialized instances.

#5: Can you compare Amazon ECS, EC2, and EKS?

Elastic Container Service (ECS) is a fully managed container orchestration service that allows customers to run, manage, and scale Docker containers without worrying about the underlying infrastructure.

Elastic Compute Cloud (EC2) provides scalable cloud computing capacity. It can also be used to run self-managed Kubernetes clusters.

Elastic Kubernetes Service (EKS) is a fully managed Kubernetes service with a highly available and scalable Kubernetes control plane.

Eucalyptus (Elastic Utility Computing Architecture) is an open-source cloud technology platform for building private and hybrid cloud computing environments.

#6: How do you monitor the performance of your AWS infrastructure?

While the choice of monitoring tools will depend on the requirements of the infrastructure (size, complexity, etc.), some standard monitoring tools include:

- Amazon CloudWatch is AWS’s primary monitoring service. It allows customers to monitor various metrics and logs related to their infrastructure.

- AWS CloudTrail records API calls made in customers’ AWS accounts, allowing them to monitor activity across their AWS infrastructure

- AWS Trusted Advisor provides recommendations for optimizing the performance, security, and cost of AWS resources

- Third-party monitoring tools such as Datadog, Nagios, New Relic, and nOps

Azure cloud engineer interview questions

#1: What are the key benefits of Azure versus other cloud service providers?

Azure integrates well with Microsoft’s ecosystem of products and services (which may be important for enterprises with a significant investment in Microsoft technology).

It also offers strong support for deploying and managing hybrid cloud architecture and is one of the fastest-growing cloud providers.

#2: Can you explain the purpose and use of Azure’s load-balancing services?

Load balancing refers to the distribution of workloads across multiple computing resources, reducing the load on individual resources and improving performance.

Azure offers these primary services for load balancing:

- Front Door: offers Layer 7 capabilities like SSL offload, path-based routing, fast failover, caching, etc., to improve performance and availability

- Traffic Manager: DNS-based load balancing service that enables the optimal distribution of traffic across global Azure regions

- Application Gateway: provides an application delivery controller (ADC) as a service, offering Layer 7 load balancing and helping optimize web farm productivity by offloading CPU-intensive SSL termination to the gateway

- Azure Load Balancer: high-performance ultra-low-latency Layer 4 load-balancing service (inbound and outbound) for all UDP and TCP protocols
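The idea shared by all of these services, spreading requests so no single backend is overloaded, can be shown with two common balancing strategies: round robin and least connections. This is a conceptual sketch with hypothetical backend names; it does not reflect how any specific Azure service is implemented.

```python
from itertools import cycle
from typing import Dict, List


class RoundRobinBalancer:
    """Hand out backends in a fixed rotation."""

    def __init__(self, backends: List[str]):
        self._rotation = cycle(backends)

    def pick(self) -> str:
        return next(self._rotation)


class LeastConnectionsBalancer:
    """Send each request to the backend with the fewest active connections."""

    def __init__(self, backends: List[str]):
        self.active: Dict[str, int] = {b: 0 for b in backends}

    def pick(self) -> str:
        # min() returns the first backend among ties, in listed order.
        backend = min(self.active, key=self.active.get)
        self.active[backend] += 1
        return backend

    def release(self, backend: str) -> None:
        self.active[backend] -= 1


backends = ["vm-1", "vm-2", "vm-3"]
rr = RoundRobinBalancer(backends)
print([rr.pick() for _ in range(4)])  # ['vm-1', 'vm-2', 'vm-3', 'vm-1']

lc = LeastConnectionsBalancer(backends)
first, second = lc.pick(), lc.pick()  # vm-1, then vm-2
lc.release(first)                     # vm-1's request finishes
print(lc.pick())                      # vm-1 (tied with vm-3 at zero, listed first)
```

Round robin is simplest when requests cost about the same; least connections adapts better when some requests hold a backend much longer than others.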

#3: Can you describe how you would set up an auto-scaling solution on Azure?

The first step in setting up auto-scaling is to define the criteria that will trigger scaling, which Azure Monitor evaluates against collected metrics. These could be based on factors such as CPU utilization or network traffic.

Then, you create a scaling action, such as increasing or decreasing the number of virtual machines in a scale set, that will be taken when the criteria are met. You also configure the scaling rules that determine when and how the scaling action will occur, such as thresholds, minimum and maximum instance counts, and cooldown periods.

Finally, you test the auto-scaling solution to ensure it works correctly and that the scaling criteria, alerts, and actions are appropriately configured and deploy it to your production environment.
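The rule logic configured in these steps can be modeled as a small function: scale out when a metric exceeds an upper threshold, scale in below a lower threshold, stay within minimum and maximum instance counts, and wait out a cooldown between actions so the system does not flap. Azure autoscale settings expose similar concepts, but this is a simplified local simulation that calls no Azure API; the thresholds and names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ScaleRule:
    scale_out_above: float = 70.0  # average CPU %, hypothetical threshold
    scale_in_below: float = 30.0
    step: int = 1                  # instances added or removed per action
    min_instances: int = 2
    max_instances: int = 10
    cooldown_s: float = 300.0      # seconds to wait after any scaling action


def evaluate(rule: ScaleRule, instances: int, avg_cpu: float,
             now: float, last_action_at: Optional[float]) -> int:
    """Return the new instance count for one evaluation of the rule."""
    if last_action_at is not None and now - last_action_at < rule.cooldown_s:
        return instances  # still cooling down from the previous action
    if avg_cpu > rule.scale_out_above:
        return min(rule.max_instances, instances + rule.step)
    if avg_cpu < rule.scale_in_below:
        return max(rule.min_instances, instances - rule.step)
    return instances


rule = ScaleRule()
print(evaluate(rule, 3, avg_cpu=85, now=0, last_action_at=None))   # 4: scale out
print(evaluate(rule, 4, avg_cpu=90, now=120, last_action_at=0))    # 4: in cooldown
print(evaluate(rule, 4, avg_cpu=20, now=600, last_action_at=0))    # 3: scale in
print(evaluate(rule, 2, avg_cpu=10, now=900, last_action_at=600))  # 2: at minimum
```

Running a function like this against recorded metrics is one cheap way to sanity-check thresholds before testing the real configuration.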

#4: Can you describe the steps to migrate an on-premises application to Azure?

Basic and intermediate answers to this question could discuss broad patterns and best practices for migrations, such as rehosting, refactoring, rearchitecting, and rebuilding.

An advanced answer will likely go into the concrete steps required to migrate web applications from on-premises to Azure.

Google Cloud engineer interview questions

#1: What are the key benefits of GCP versus other cloud providers?

GCP is often considered the cheapest provider of cloud computing services, though prices have leveled out over time.

GCP has a strong focus on data analytics and machine learning solutions. A recent study also found it to have the best throughput performance.

#2: What is the difference between Google Compute Engine and App Engine?

Google Compute Engine is a cloud-based IaaS offering. It gives users complete control over the operating system, networking, and storage of their VMs.

Google App Engine is a cloud-based PaaS offering that provides users with a managed environment for building and running web applications (Google manages the underlying infrastructure). It gives users less control but increases the ease and speed of development.

#3: Can you explain the use of Google Cloud SQL for MySQL and how it differs from a traditional database setup?

In a traditional database setup, customers have to manage the provisioning and maintenance of the servers, backups, and other infrastructure themselves. With Google Cloud SQL, Google handles much of this work, including the infrastructure behind the database’s scalability, availability, and security.

Cloud SQL also differs on pricing: it operates on a pay-as-you-go model, in contrast to the traditional model of investing up front in hardware, software, and infrastructure upkeep.


source: theverge.com

#4: Can you explain the use of Google Cloud DNS for managing domain names?

Google Cloud DNS is a managed Domain Name System (DNS) service that publishes your domain names to the global DNS. DNS is a hierarchical distributed database that lets you store IP addresses and other data and look them up by name. Cloud DNS lets you publish your zones and records in DNS without the burden of managing your own DNS servers and software.

Cloud DNS offers both public zones and privately managed DNS zones. It also supports Identity and Access Management (IAM) permissions at the project level and individual DNS zone level.

#5: How do you build and deploy Google Cloud Functions?

Google Cloud Functions allows you to run single-purpose, short-lived functions in response to events and automatically manages the infrastructure required to run them. While more advanced answers will dive into the specifics of building and deploying cloud functions, on a high level, the process involves:

First, choose a development environment, whether local or in the cloud, using the Google Cloud Console, the gcloud command-line tool, or an integrated development environment (IDE) such as Visual Studio Code.

Next, write the function code.

Then, determine the trigger or event that initiates the execution of the function. Examples include HTTP requests, changes in a Cloud Storage bucket, or new messages in a Pub/Sub topic.

Finally, deploy the function, for example with the gcloud command-line tool or through a CI/CD tool like Cloud Build.
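As a sketch of the "write the function code" step, here is an event-driven function in the style of a first-generation Python Cloud Function triggered by a Cloud Storage upload: it receives the object's metadata as an `event` dict (including `bucket` and `name`) plus a `context` object. The function name and processing logic are hypothetical, and the handler is exercised locally with a hand-built event before any deployment.

```python
from typing import Any, Dict


def on_upload(event: Dict[str, Any], context: Any) -> str:
    """Single-purpose, short-lived function run once per new object in a bucket.
    `event` carries the object's metadata; `context` carries event metadata."""
    bucket = event["bucket"]
    name = event["name"]
    # Keep the work small and stateless; real processing would go here.
    message = f"Processing gs://{bucket}/{name}"
    print(message)  # stdout from a deployed function is collected in Cloud Logging
    return message


if __name__ == "__main__":
    # Local test with made-up values before deploying.
    on_upload({"bucket": "example-bucket", "name": "uploads/report.csv"}, None)
```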

Wrapping Up:

Celential’s sourcing solution leverages a vast talent network of 15M+ tech candidate profiles to deliver top-quality talent with zero effort or learning curve on your part. It takes just three days on average to receive warm candidates ready to interview.

Through the power of cutting-edge AI and ML, our Virtual Recruiter will find the best matches and engage candidates at scale — even for specialized roles like Cloud Engineers, Cloud Security Engineers, and AWS engineers (and other engineering roles such as ML Engineer, Data Scientist, Fullstack Developer, Backend Developer, Frontend developer, DevOps Engineers, Engineering Managers, and more).

With your recruiting team’s time freed up to nurture and engage candidates, you’ll soon close hires for this competitive role.

Schedule a call with one of our product experts to find out how to receive tech and AI talent for up to 50% less cost!
